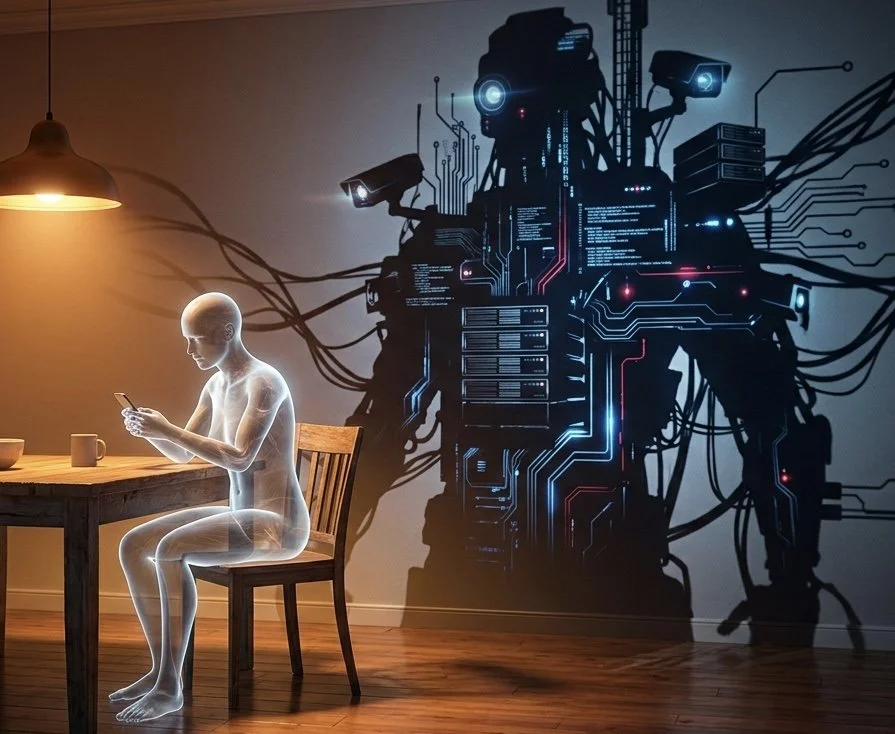

YOUR DIGITAL TWIN IS ALREADY ALIVE. YOU BUILT IT. They Just Own It.

TLDR

Data brokers and AI companies have already built detailed digital profiles on billions of people — without consent, without notification, and without any meaningful way to stop it. These profiles track your location, predict your behavior, clone your voice, read your mental health from your word choices, and will outlive you. Under U.S. law, you have almost no rights over any of it.

All you did was check the weather, order dinner, get directions, text your kid, and scroll for eleven minutes before bed.

That was enough.

Every single one of those moves just trained something.

Not you. Something about you. A version of you that lives in a server farm somewhere in a state you’ve never visited, humming quietly, getting smarter, and working for people you’ve never met.

Welcome to your digital twin. Whether you know it or not, right now, at this very moment, it is shaping decisions about you: which jobs you see, which ads follow you, which prices you pay, whether you’re a risk.

And for your kids — which shows they watch, which content finds them, which version of the world they’re being trained to believe is normal.

None of those choices belong to you. Or your children.

10,000 Data Points. Per Person. Billions of People.

Companies like Acxiom openly brag about holding up to 10,000 data points on billions of people worldwide. (In 2018 Acxiom’s parent company sold the data brokerage to the ad holding company Interpublic Group and renamed what was left LiveRamp.) That’s not a leak. That’s the product. That’s the pitch deck.

Your location history. Your purchase behavior. Your browsing patterns. Your device IDs, email addresses, cookies — all stitched together into what the industry calls an “identity graph.” One persistent profile. Updating constantly. Following you across every app, every site, every device, every time you do anything involving electricity.

You don’t opt into this. You just exist, and it happens.
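
For readers who want to see the mechanics, here is a minimal sketch of how an identity graph could stitch identifiers together. It assumes a simple union-find structure; the `IdentityGraph` class and every identifier in it are invented for illustration, and real broker systems are not public and are surely more elaborate.

```python
from collections import defaultdict


class IdentityGraph:
    """Toy identity graph: identifiers seen together merge into one profile."""

    def __init__(self):
        self.parent = {}

    def _find(self, x):
        # Walk up to the root identifier, shortening the path as we go.
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def link(self, a, b):
        # Record that two identifiers were observed together
        # (same login, same device, same household network).
        root_a, root_b = self._find(a), self._find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a

    def profiles(self):
        # Group every known identifier under its root.
        groups = defaultdict(set)
        for identifier in list(self.parent):
            groups[self._find(identifier)].add(identifier)
        return list(groups.values())


graph = IdentityGraph()
# Hypothetical observations; none of these identifiers are real.
graph.link("email:pat@example.com", "cookie:abc123")
graph.link("cookie:abc123", "device:phone-01")
graph.link("device:phone-01", "device:tablet-07")
graph.link("email:sam@example.com", "cookie:zzz999")

merged = graph.profiles()
for profile in merged:
    print(sorted(profile))
```

Four scattered identifiers collapse into one profile because each was seen next to another at least once. The email address never has to touch the tablet directly.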

A Company Built a Database of 30 Billion Faces. Yours Is Probably In It.

Clearview AI scraped 30 billion images from Facebook, Instagram, and the rest of the internet. The CEO admitted it. To the BBC. On camera. Without flinching.

Your face. Your friend’s face. Your kid’s face. Any photo you ever made public — and a lot you thought you hadn’t — is now a biometric data point in a facial recognition database being sold to law enforcement agencies across the country. Over 3,100 of them, at last count.

Nobody asked. Nobody knocked. They just took it.

And here’s the part that should keep you up: there is no delete button. Even the European regulators who fined Clearview millions of euros couldn’t make the photos disappear. Once you’re in, you’re in. The database doesn’t do refunds.

They Can Clone Your Voice in Three Seconds.

Not three hours. Not three minutes.

Three seconds of audio — a voicemail, a TikTok, a clip from a Zoom call — and Microsoft’s VALL-E system can synthesize your voice saying anything. Any sentence. Any tone. Any emotion. It even replicates background noise. If your sample sounds like a parking garage, the clone sounds like a parking garage.

Scammers already figured this out. People have already lost money because a voice that sounded exactly like their grandchild called them in a panic from a jail that didn’t exist.

The technology is real. The research is published. The fraud is documented.

They Know Where You’re Going Before You Do.

Your location data tells a story. Machine learning reads it. AI models trained on GPS traces can predict your next location (your next point of interest, your routine, your deviations from that routine) with high accuracy, hours in advance.

You think you’re unpredictable. You’re not. You’re a pattern. You’ve always been a pattern. Now there’s software that can read the pattern faster than you can live it.
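
To make "you're a pattern" concrete, here is a deliberately crude sketch of next-location prediction. It assumes a first-order transition count (where do you usually go after this place?) rather than the deep models used in the research cited in the sources, and the visit log and function names are invented for illustration.

```python
from collections import Counter, defaultdict


def train_transitions(visits):
    """Count how often each place is followed by each other place."""
    transitions = defaultdict(Counter)
    for current_place, next_place in zip(visits, visits[1:]):
        transitions[current_place][next_place] += 1
    return transitions


def predict_next(transitions, current_place):
    """Return the most frequent follow-up place, or None if the place is unseen."""
    followers = transitions.get(current_place)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical visit log for one person, in time order.
visits = ["home", "gym", "work", "cafe", "work", "home",
          "home", "gym", "work", "home", "gym", "work"]

model = train_transitions(visits)
guess = predict_next(model, "gym")
print(guess)
```

Even this toy version guesses the next stop after a dozen observations. Real systems add time of day, day of week, and years of history.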

They Can See the Depression Coming. Three Months Early.

Research published in the Proceedings of the National Academy of Sciences (peer-reviewed, from Stony Brook University and the University of Pennsylvania) found that an algorithm analyzing Facebook posts could predict a depression diagnosis up to three months before a doctor recorded it.

Three months. From your word choices. From your use of first-person pronouns. From the particular way sadness leaks into language before the person using it has consciously admitted anything is wrong.

That’s not a dystopian novel. That’s a published academic paper from 2018 that nobody talked about at dinner.

Now imagine that same capability in the hands of an insurance company. Or an employer. Or anyone who can afford to buy behavioral data segments from a broker who doesn’t ask what you plan to do with it.
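
How little it takes to turn word choice into a number is worth seeing. The sketch below counts only the share of first-person singular pronouns in a post, one of the signals mentioned above. It is not the study's model, which combined many language features; the word list, function name, and sample post are assumptions made for illustration, and a single number like this diagnoses nothing.

```python
import re

# Assumed word list for illustration; not taken from the study.
FIRST_PERSON_SINGULAR = {"i", "me", "my", "mine", "myself"}


def first_person_rate(text):
    """Fraction of words in `text` that are first-person singular pronouns."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    # Strip contractions so "I'm" and "I've" count as "i".
    hits = sum(1 for word in words if word.split("'")[0] in FIRST_PERSON_SINGULAR)
    return hits / len(words)


# Invented sample post.
post = "I can't sleep and I feel like my days are slipping away from me"
rate = first_person_rate(post)
print(round(rate, 3))
```

Run that across years of posts, add a few hundred more features, and you have the raw material for a behavioral segment.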

Deleting Your Account Changes Nothing.

You already thought of this. You’ve probably thought about going dark — deleting the apps, nuking the accounts, dropping off the grid in the small, symbolic way that modern life allows.

The FTC has news for you. Data brokers don’t care if you delete your Instagram. They hold historical data. They trade derived attributes. The profile doesn’t die when the account does. It just keeps updating from the other forty data streams you didn’t know were feeding it.

The opt-out mechanisms that exist, where they exist at all, are fragmented, incomplete, and undercut by downstream buyers who never received the memo and aren’t legally required to care.

And You Have Almost No Rights Over Any of It.

No U.S. federal law gives you a general right to the inferences these companies draw about you: your financial risk profile, your health-related segments, your predicted behavior. In practice, the companies treat them as proprietary assets. Not yours. Theirs.

You can’t see them. You can’t correct them. You can’t delete them. Outside of a handful of state laws in California, Texas, and Oregon, the answer to “what do you know about me and who did you sell it to” is: none of your business.

That’s not an exaggeration. That’s the current legal framework.

The Standard Comeback — And Why It Doesn’t Work Anymore.

Every time this conversation comes up, somebody says it: I’m not doing anything wrong, so I don’t have anything to hide.

It’s a good line. It’s also twenty years out of date.

The problem was never about hiding wrongdoing. The problem is that a system now exists that knows more about you than your doctor, your spouse, or your attorney — and you have no legal relationship with it, no access to it, and no recourse against it.

It’s not about guilt. It’s about power. Who has the information, who controls the narrative, and who gets to make decisions about your life based on a profile you never consented to, never reviewed, and can never correct.

The Twin Never Sleeps.

When you die, the profile continues. The voice clone lives on. The behavioral data gets archived. Companies already market digital legacy services that let your AI avatar keep interacting with people after you’re gone — not as you, but as whatever version of you the corporation that owns it decides to present.

It keeps going: edited, monetized, and managed by people who never knew you and never will.

Q&A

What is a digital twin?

A digital profile built from your data — location, purchases, browsing, behavior — that exists independently of you, updates continuously, and is owned by corporations you have never interacted with.

What is an identity graph?

A system that links your emails, device IDs, cookies, and location data into one persistent profile that follows you across every app and site you use.

Who is Acxiom and what do they know about me?

Acxiom is a data broker (its former parent company now operates as LiveRamp) that holds up to 10,000 data points per person on billions of people globally, covering location, purchases, demographics, and browsing behavior, assembled without your direct consent and sold to third parties.

What is Clearview AI?

A facial recognition company that scraped 30 billion images from social media without consent and sells access to that database to over 3,100 law enforcement agencies.

Can I get my face removed from Clearview AI’s database?

In most places, no. Once ingested, the biometric faceprint cannot be removed in most jurisdictions. Residents of Illinois, and of some states with consumer privacy laws such as California, can submit opt-out or deletion requests. Everyone else has no meaningful recourse under current federal law.

What is voice cloning?

AI technology that replicates a person’s voice from a short audio sample. Microsoft’s VALL-E demonstrated this from just three seconds of audio, replicating tone, emotion, and background acoustics.

How are scammers using voice cloning?

By pulling short audio clips from social media or voicemails, cloning a family member’s voice, and calling victims claiming to be in danger. People have lost money to calls that sounded exactly like their child or grandchild.

Can AI predict where I will be in the future?

Yes. Machine learning models trained on GPS data can predict a person’s next location with high accuracy hours in advance, confirmed by multiple peer-reviewed studies.

Can social media predict mental illness?

Yes. A study published in the Proceedings of the National Academy of Sciences found that Facebook language analysis could predict a depression diagnosis up to three months before a doctor made it.

Who has access to mental health predictions derived from my data?

Anyone who can buy behavioral data segments from a broker. That includes insurers, employers, and advertisers. No federal law requires disclosure or consent.

Does deleting my accounts remove my data from brokers?

No. Brokers retain historical data and derived profiles regardless of whether you delete your accounts. Opt-out mechanisms are fragmented and do not cover most downstream buyers.

What legal rights do I have over my data broker profile?

Almost none at the federal level. Some protections exist in California, Texas, and Oregon, but they focus on registration and disclosure — not on giving you control over what has already been inferred about you.

What happens to my digital profile when I die?

It continues. Data is retained, traded, and in some cases used to power AI avatars that can interact with your family after you are gone — controlled entirely by the corporation that owns the data.

Sources: Acxiom/LiveRamp — Harvard Business School Digital Initiative; Consumer Watchdog “Data Stalkers” Report (2024); FTC Data Brokers: A Call for Transparency and Accountability; Clearview AI — CEO Hoan Ton-That, BBC interview (March 2023); Microsoft VALL-E — arXiv research paper (January 2023); DST-Predict Individual Mobility Prediction — PMC; Eichstaedt et al., Proceedings of the National Academy of Sciences (2018); EPIC Data Broker Report; Cozen O’Connor — New Data Broker Laws in Texas and Oregon (2024).